Exploring Prompt Injection Attacks, NCC Group Research Blog

Por um escritor misterioso

Last updated 24 abril 2025

Have you ever heard about Prompt Injection Attacks[1]? Prompt Injection is a new vulnerability that is affecting some AI/ML models and, in particular, certain types of language models using prompt-based learning. This vulnerability was initially reported to OpenAI by Jon Cefalu (May 2022)[2] but it was kept in a responsible disclosure status until it was…

Prompt Injection: A Critical Vulnerability in the GPT-3

Electronics, Free Full-Text

The ELI5 Guide to Prompt Injection: Techniques, Prevention Methods

The ELI5 Guide to Prompt Injection: Techniques, Prevention Methods

The Bug Bounty Hunter – Telegram

The ELI5 Guide to Prompt Injection: Techniques, Prevention Methods

🟢 Prompt Injection Learn Prompting: Your Guide to Communicating

👉🏼 Gerald Auger, Ph.D. على LinkedIn: #chatgpt #hackers #defcon

LLM Prompt Injection Attacks & Testing Vulnerabilities With

Recomendado para você

-

This is a cheat engine that works on Android. : r/ReverseEngineering24 abril 2025

This is a cheat engine that works on Android. : r/ReverseEngineering24 abril 2025 -

You can't use Cheat Engine to speed up your game anymore! : r/RotMG24 abril 2025

You can't use Cheat Engine to speed up your game anymore! : r/RotMG24 abril 2025 -

reddit-emacs-tips-n-tricks/out.md at master · LaurenceWarne/reddit-emacs-tips-n-tricks · GitHub24 abril 2025

-

GitHub - Lissy93/awesome-privacy: 🦄 A curated list of privacy & security-focused software and services24 abril 2025

-

![VirtualXposed for GameGuardian APK [No Root] » VirtualXposed](https://virtualxposed.com/wp-content/uploads/2019/02/virtualxposed-for-gameguardian-apk-download-1024x576.png) VirtualXposed for GameGuardian APK [No Root] » VirtualXposed24 abril 2025

VirtualXposed for GameGuardian APK [No Root] » VirtualXposed24 abril 2025 -

What's On Your Bank Card? Hacker Tool Teaches All About NFC And RFID24 abril 2025

What's On Your Bank Card? Hacker Tool Teaches All About NFC And RFID24 abril 2025 -

So you installed ChromeOS Flex, Now what?, by David Field24 abril 2025

So you installed ChromeOS Flex, Now what?, by David Field24 abril 2025 -

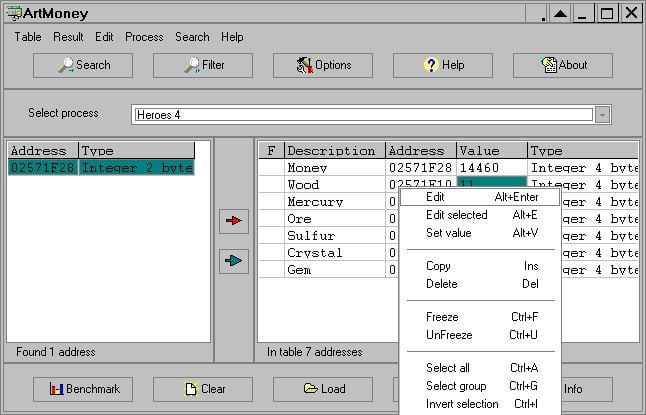

10 Best Cheat Engine Alternatives: Top Game Cheating Tools in 202324 abril 2025

10 Best Cheat Engine Alternatives: Top Game Cheating Tools in 202324 abril 2025 -

9sdavasd by bqvsddff - Issuu24 abril 2025

9sdavasd by bqvsddff - Issuu24 abril 2025 -

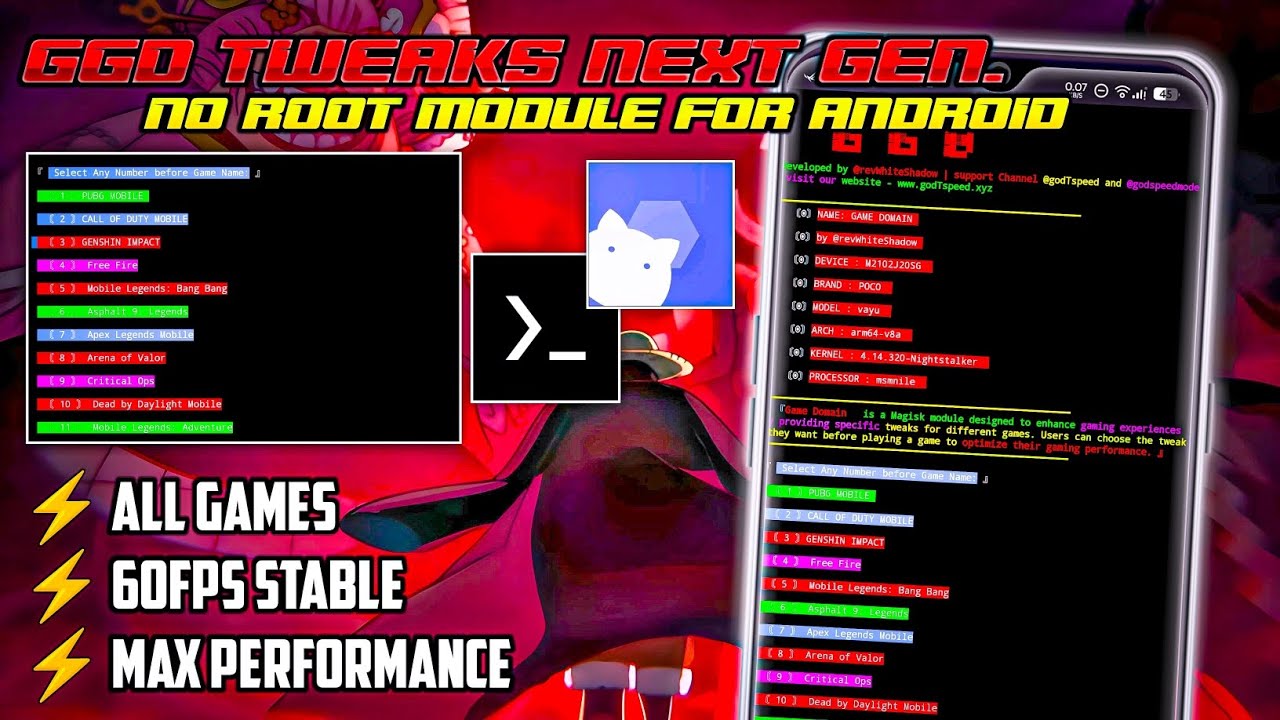

Game Engine! NO ROOT Tweaks Unlock 60-120FPS🔥⚡24 abril 2025

Game Engine! NO ROOT Tweaks Unlock 60-120FPS🔥⚡24 abril 2025

você pode gostar

-

Wait, This Soul Eater Roblox Game is FREAKING AWESOME! (Soul Eater24 abril 2025

Wait, This Soul Eater Roblox Game is FREAKING AWESOME! (Soul Eater24 abril 2025 -

Eterno 831 (2022) Eternal 831 HBOMAX24 abril 2025

Eterno 831 (2022) Eternal 831 HBOMAX24 abril 2025 -

Assistir Dungeon ni Deai wo Motomeru no wa Machigatteiru Darou ka 4 Online completo24 abril 2025

Assistir Dungeon ni Deai wo Motomeru no wa Machigatteiru Darou ka 4 Online completo24 abril 2025 -

wanna be nerd: Elementos, de Peter Sohn24 abril 2025

wanna be nerd: Elementos, de Peter Sohn24 abril 2025 -

Cadeira De Salão Cabeleireiro, Maquiagem, Barbeiro Rosa em24 abril 2025

Cadeira De Salão Cabeleireiro, Maquiagem, Barbeiro Rosa em24 abril 2025 -

Player 1 Video Games24 abril 2025

Player 1 Video Games24 abril 2025 -

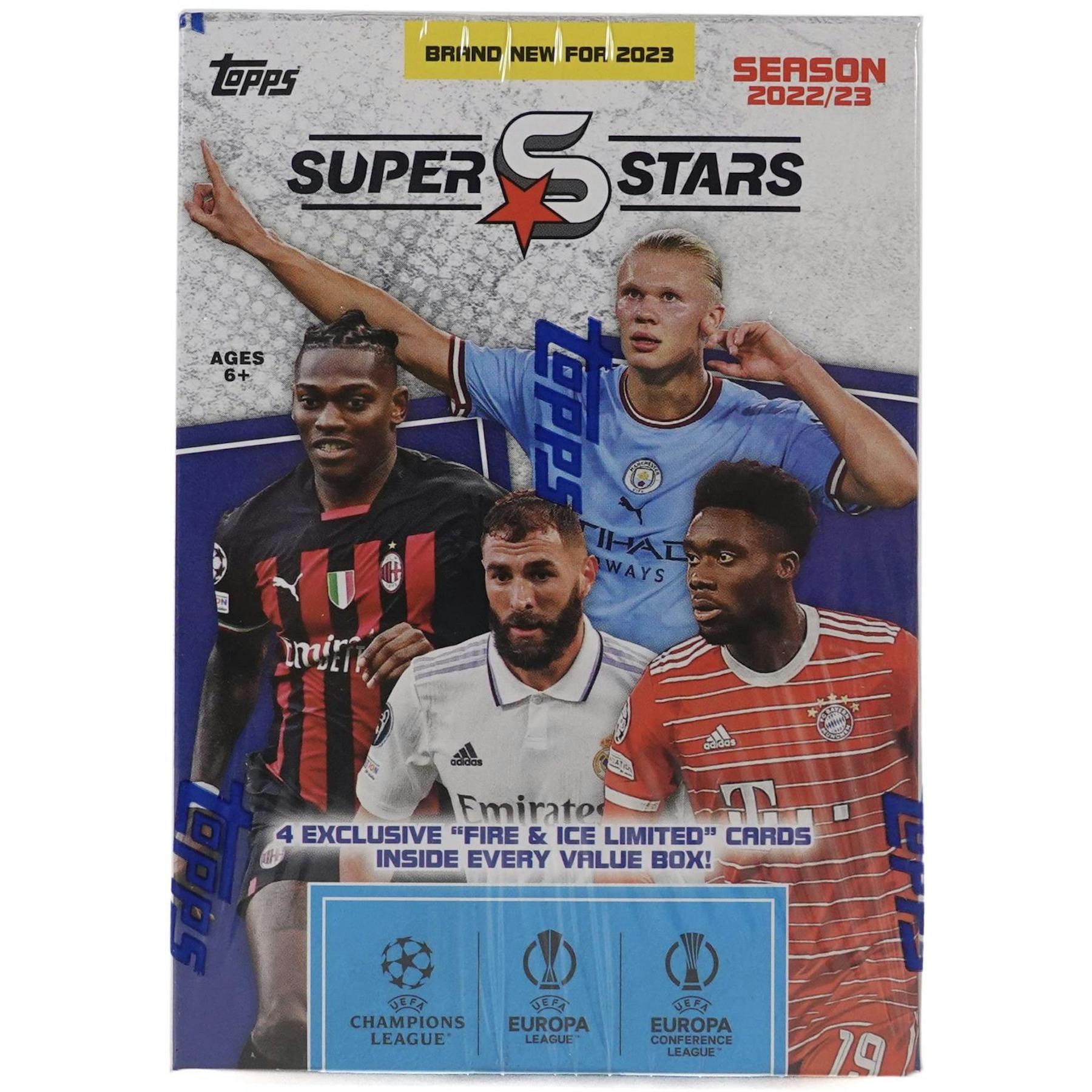

2022/23 Topps UEFA Superstars Soccer 9-Pack Blaster Box24 abril 2025

2022/23 Topps UEFA Superstars Soccer 9-Pack Blaster Box24 abril 2025 -

![Kubo-san wa Mob wo Yurusanai - 01 [First Look] - Anime Evo](http://www.anime-evo.net/wp-content/uploads/2023/01/KuboY_01_1.jpg) Kubo-san wa Mob wo Yurusanai - 01 [First Look] - Anime Evo24 abril 2025

Kubo-san wa Mob wo Yurusanai - 01 [First Look] - Anime Evo24 abril 2025 -

HBO Shop24 abril 2025

HBO Shop24 abril 2025 -

Kojima's Xbox Exclusive Is Called OD And Involves Jordan Peele24 abril 2025